By CIPESA Writer

Uganda is embracing the opportunities offered by Artificial Intelligence (AI) and Digital Public Infrastructure (DPI) as drivers of national development. Both promise efficiency, improved service delivery, financial inclusion, and economic growth. However, as the country advances its digital transformation agenda, questions linger about the adequacy of safeguards for citizens, especially where business and rights intersect.

These questions were at the centre of a High-Level Multi-Stakeholder Dialogue on Business and Digital Rights convened by the Collaboration on International ICT Policy for East and Southern Africa (CIPESA) on May 7, 2026, under the Advancing Respect for Human Rights by Businesses in Uganda (ARBHR) project, supported by Enabel and the European Union (EU). The dialogue brought together 81 participants, including government officials, civil society actors, private sector representatives, researchers, and digital innovators, to reflect on the growing recognition that digital transformation is not simply a technical process but also a governance and human rights issue that demands transparency and accountability.

The discussions at the dialogue revealed a tension between innovation and human rights. Systems such as digital identity (ID), payment platforms, and data-sharing frameworks centralise enormous amounts of personal data and are reshaping power relationships between citizens, the government, and corporations.

Participants noted that in the absence of strong governance frameworks, these systems can enable exclusion, surveillance, and misuse of personal information. Further, concerns were raised about fragmented systems across government agencies, weak interoperability, and limited public awareness regarding how personal data is collected, stored, and shared.

Meanwhile, as emerging technologies such as AI take hold in the country, the Uganda National AI Landscape Assessment positions AI as a key driver of economic growth.

However, the Assessment documents the absence of a dedicated AI policy and regulatory framework, a shortage of AI skills, and insufficient collaboration between academia and the technology sector. Like other governments across Africa, Uganda is also increasingly investing in DPI systems, including digital ID and payment systems, as well as data exchange frameworks. DPI is being positioned as a key pillar of digital transformation strategies across the continent. However, DPI systems remain heavily reliant on public data and algorithmic decision-making. If designed and deployed without sufficient citizen participation, independent oversight, legal safeguards, and alignment with the public interest, they risk becoming tools of exclusion, exploitation, and foreign dependency.

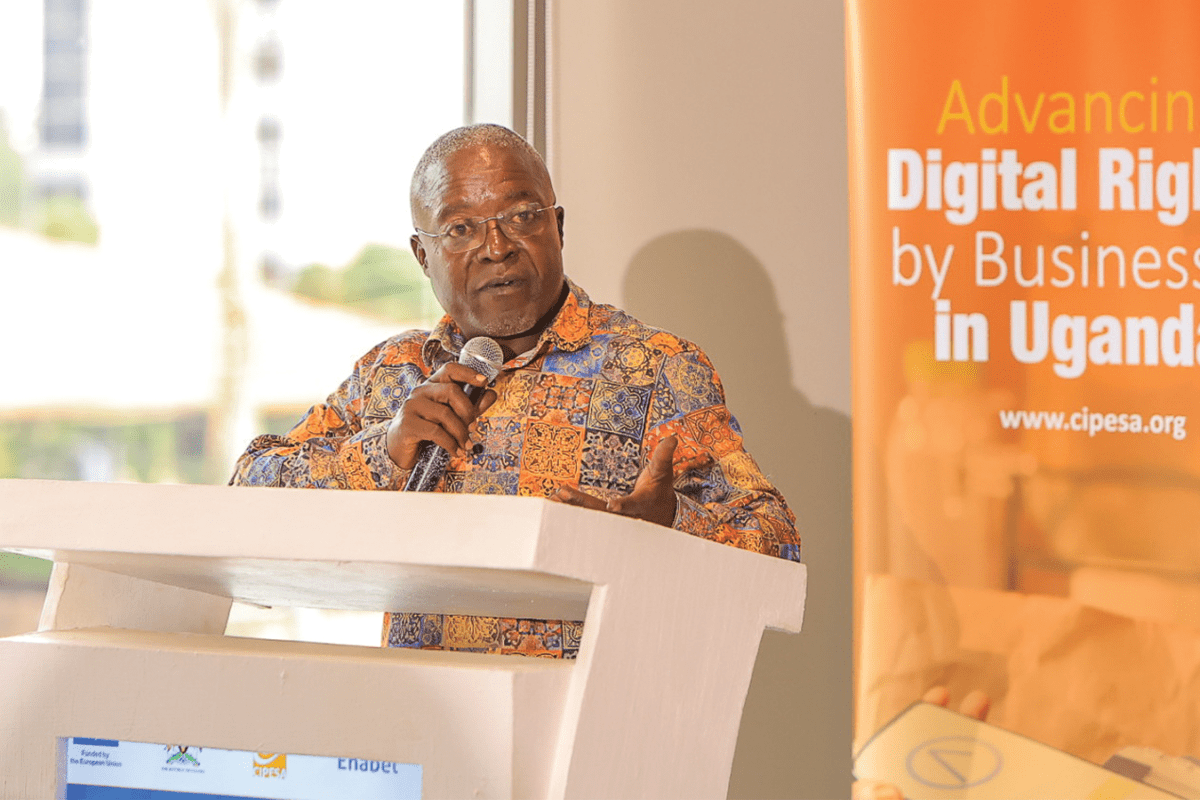

Various efforts related to the adoption of emerging technologies are underway. Ambrose Ruyooka, the Assistant Commissioner at Uganda’s Ministry of ICT and National Guidance, noted that the Ministry is taking a cautious approach to regulation by prioritising standards, policy guidance, and institutional learning before introducing binding laws. This includes efforts to domesticate the UNESCO Recommendation on the Ethics of AI and a Readiness Assessment process. The dialogue also came on the heels of the Ministry’s call for stakeholder input to the National AI and Emerging Technologies Strategy, signaling a growing policy focus on responsible digital transformation.

Further, he stressed that in the age of AI, stakeholders should not be “passive consumers” of the digital economy but should actively “participate in shaping it”. He pointed out that such participation requires the local technical capacity to “build, operate and audit” AI and DPI systems independently.

While government efforts are laying the foundation for AI governance, businesses also have an obligation to innovate responsibly and adopt robust human rights due diligence processes to support regulatory compliance and foster trust and sustainability.

At a broader level, the dialogue demonstrated how digital rights are increasingly intertwined with economic rights and social justice. As a result, corporate responsibility can no longer be limited to traditional labour or environmental concerns. Companies are now expected to consider how their digital operations affect privacy, equality, freedom of expression, and access to information.

This shift is especially significant for Uganda’s small and medium enterprises (SMEs), many of which are digitising rapidly but often lack the resources and expertise needed to manage cybersecurity and data effectively.

Presentations from implementing partners, including the Private Sector Foundation Uganda (PSFU), Evidence and Methods Lab (EML), Wakiso District Human Rights Committee (WDHRC), Boundless Minds, and Girls for Climate Action (G4CA), highlighted both the scale of the challenge and the potential for practical intervention. Partner interventions on digital rights and cybersecurity are strengthening awareness and practices among both rural and urban entities.

The European Union’s (EU) Commitment to Human Rights in Business

Laurianne Comard, Programme Officer at the EU Delegation to Uganda, noted that the EU and its member states are currently among Uganda’s largest investors in the private sector, with over 1.4 billion euros deployed to foster sustainable economic growth and high-value exports. Specifically, she stated that the EU supports Uganda’s National Action Plan (NAP) on Business and Human Rights with over 20 billion Uganda Shillings, with a specific focus on strengthening human rights practices in business operations, particularly around labour standards and women’s rights.

Course-Correcting on Inclusion

Participants also noted that public participation in digital governance remains limited. Several civil society actors argued that consultations around the national AI strategy have not been broad enough, particularly for rural communities, labour unions, youth groups, and persons with disabilities. Frameworks developed without broad public engagement risk lacking legitimacy and failing to address the lived realities of those most affected.

The dialogue also reflected on the NAP on Business and Human Rights and the consultative processes underpinning its evaluation and the development of NAP II. Lydia Nabiryo, Assistant Commissioner at the Ministry of Gender, Labour and Social Development, acknowledged that the government is actively working to broaden participation in the NAP’s revision.

Her remarks were a candid recognition that the first NAP, while a significant milestone, left representational gaps, and that those gaps are now being deliberately addressed. She noted, “If you have noticed this time round, we are having a more inclusive dialogue with stakeholders that were not necessarily represented in the first NAP. So, not only is the government evaluating, but we’re also course correcting.”

Participation should not be limited to policy processes. Shane Ssenyonga, an innovator, pointed out the need for collaborative spaces that support entrepreneurs and businesses to build scalable solutions that are responsive to social, cultural, and economic realities.

Recommendations for Action

The dialogue called for stronger human rights safeguards and access to remedy within digital transformation strategies and business operations. These strategies should be in harmony with existing digital laws and policies and should strengthen oversight and enforcement by relevant institutions. For businesses, the adoption of forward-looking internal policies and risk management practices was emphasised to ensure trusted deployments and reduce barriers to uptake. Advocacy, documentation, and digital literacy interventions remain critical to public education and compliance monitoring.