Announcement

The Collaboration on International ICT Policy for East and Southern Africa (CIPESA) is calling for proposals to support digital rights work across Africa.

This is the 10th call for proposals under the CIPESA-run Africa Digital Rights Fund (ADRF), an initiative that provides rapid-response and flexible grants to organisations and networks to implement activities that promote digital rights and digital democracy, including advocacy, litigation, research, policy analysis, skills development, and movement building.

The current call is particularly interested in proposals for work related to:

- Data governance including aspects of data localisation, cross-border data flows, biometric databases, and digital ID.

- Digital resilience for human rights defenders, other activists and journalists.

- Censorship and network disruptions.

- Digital economy.

- Digital inclusion, including aspects of accessibility for persons with disabilities.

- Disinformation and related digital harms.

- Technology-Facilitated Gender-Based Violence (TFGBV).

- Platform accountability and content moderation.

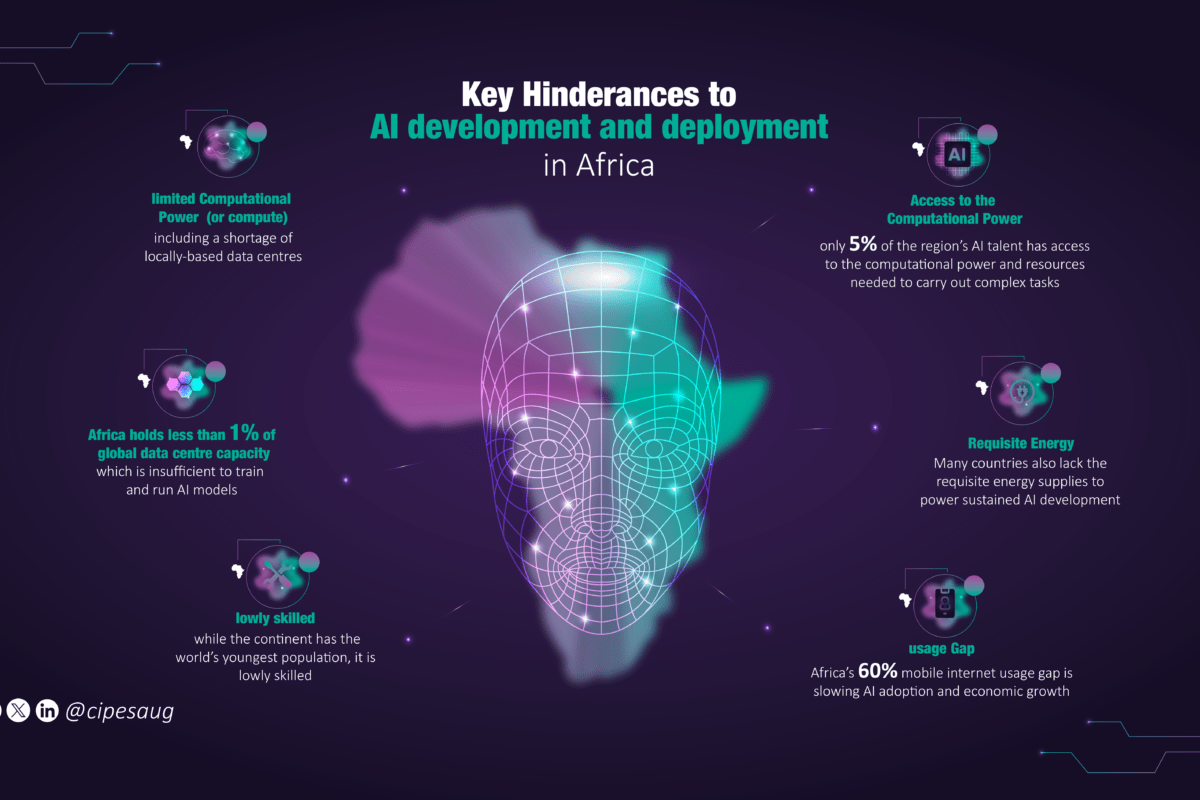

- Implications of Artificial Intelligence (AI).

- Digital Public Infrastructure (DPI).

Grant amounts available range between USD 5,000 and USD 25,000 per applicant, depending on the need and scope of the proposed intervention. Cost-sharing is strongly encouraged, and the grant period should not exceed eight months. Applications will be accepted until November 17, 2025.

Since its launch in April 2019, the ADRF has provided initiatives across Africa with more than one million US Dollars and contributed to building capacity and traction for digital rights advocacy on the continent.

Application Guidelines

Geographical Coverage

The ADRF is open to organisations/networks based or operational in Africa and with interventions covering any country on the continent.

Size of Grants

Grant size shall range from USD 5,000 to USD 25,000. Cost sharing is strongly encouraged.

Eligible Activities

The activities that are eligible for funding are those that protect and advance digital rights and digital democracy. These may include but are not limited to research, advocacy, engagement in policy processes, litigation, digital literacy and digital security skills building.

Duration

The grant funding shall be for a period not exceeding eight months.

Eligibility Requirements

- The Fund is open to organisations and coalitions working to advance digital rights and digital democracy in Africa. This includes but is not limited to human rights defenders, media, activists, think tanks, legal aid groups, and tech hubs. Entities working on women’s rights, or with youth, refugees, persons with disabilities, and other marginalised groups are strongly encouraged to apply.

- Applicants should preferably be formally registered in an African country, but in some circumstances, organisations and coalitions that do not have formal registration may be considered. Such organisations need to show evidence that they are operational in a particular African country or countries.

- The activities to be funded must take place in, or focus on, an African country or countries.

Ineligible Activities

- The Fund shall not fund any activity that does not directly advance digital rights or digital democracy.

- The Fund will not support travel to attend conferences or workshops, except in exceptional circumstances where such travel is directly linked to an activity that is eligible.

- Costs that have already been incurred are ineligible.

- The Fund shall not provide scholarships.

- The Fund shall not support equipment or asset acquisition.

Administration

The Fund is administered by CIPESA. A panel of internal and external experts will make decisions on beneficiaries based on the following criteria:

- Whether the proposed intervention fits within the Fund’s digital rights priorities.

- The relevance to the given context/country.

- Commitment and experience of the applicant in advancing digital rights and digital democracy.

- Potential impact of the intervention on digital rights and digital democracy policies or practices.

The deadline for submissions is Monday, November 17, 2025. The application form can be accessed here.